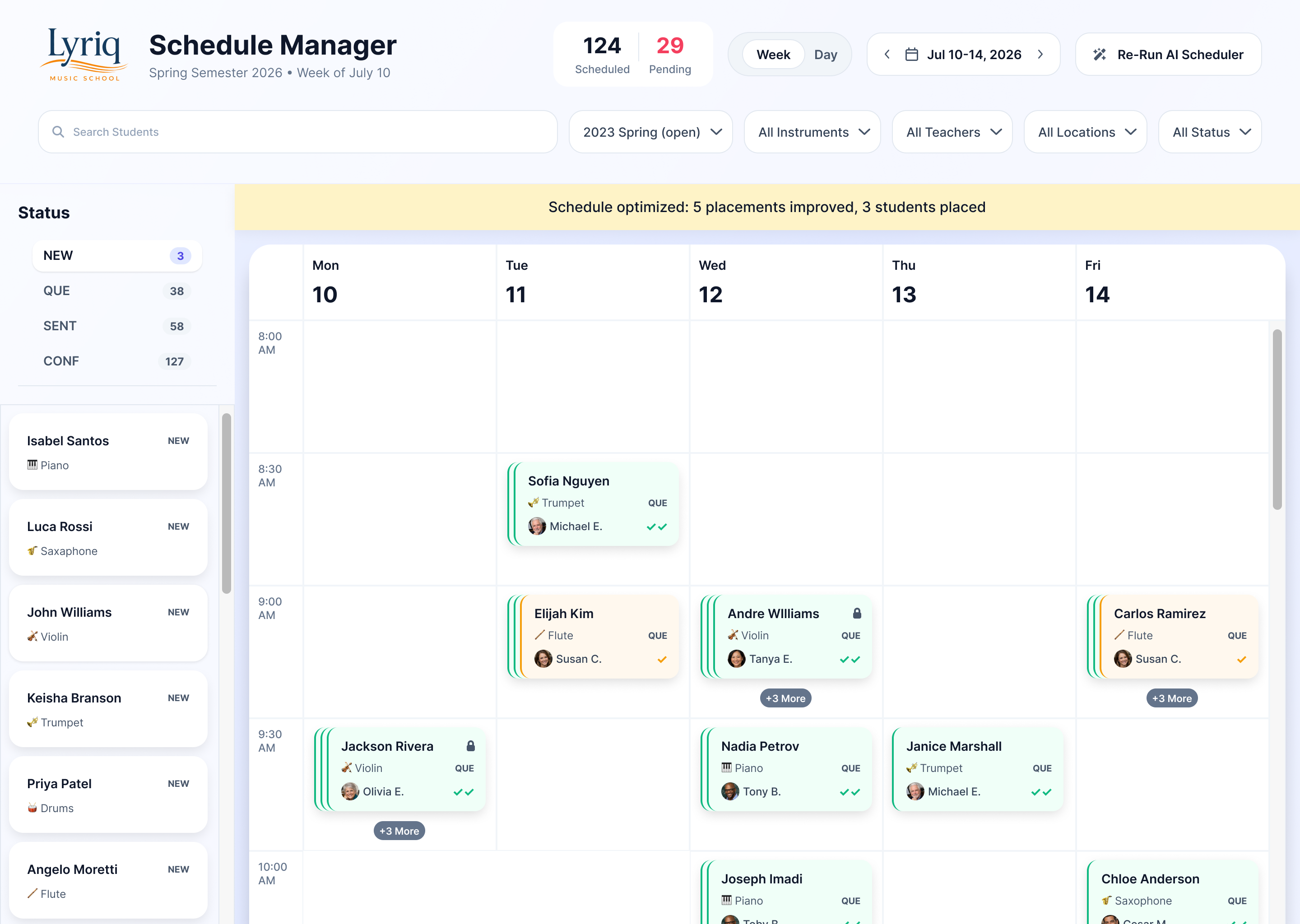

Lyriq schedules more than 300 students across multiple teachers, instruments, locations, and family constraints. I designed a hybrid AI-assisted system that generates an initial schedule, scores placement quality, and allows administrators to review, adjust, and finalize the result.

Lyriq operates more like a university than a typical music school. Students don’t enroll directly into lesson slots. Instead, they submit preferences: instrument, teacher, location, and availability.

Administrators then build the actual schedule from those preferences while balancing teacher availability, room locations, and cases where siblings need to be scheduled together.

With more than 300 students and dozens of overlapping constraints, assembling that schedule manually could take weeks.

Lyriq students could only submit preferences. The system had to turn those preferences into an actual schedule.

Matches preferences against real-world constraints

AI-generated first pass, reviewed and finalized by administrators

Constraint system balancing student preferences, teacher availability, locations, and sibling coordination.
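One way to picture how a placement might be checked against a student's preferences is the sketch below. It is illustrative only: the `Preference` and `Slot` types, the weights, and the choice to treat instrument as a hard constraint are all assumptions, not the scoring Lyriq actually uses. Sibling coordination is left out to keep the example short.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Preference:
    """What a student submits (hypothetical shape)."""
    instrument: str
    teacher: str
    location: str
    available_times: frozenset  # e.g. frozenset({"Mon 16:00", "Tue 17:00"})


@dataclass(frozen=True)
class Slot:
    """A concrete lesson slot an administrator could assign (hypothetical shape)."""
    teacher: str
    instrument: str
    location: str
    time: str


# Assumed weights for the soft constraints; the real weighting is not
# described in this case study.
WEIGHTS = {"availability": 0.5, "teacher": 0.3, "location": 0.2}


def score_placement(pref: Preference, slot: Slot) -> float:
    """Return a 0..1 score for how well a slot matches a student's preferences."""
    # Assumed hard constraint: a lesson on the wrong instrument is never valid.
    if slot.instrument != pref.instrument:
        return 0.0

    score = 0.0
    if slot.time in pref.available_times:
        score += WEIGHTS["availability"]
    if slot.teacher == pref.teacher:
        score += WEIGHTS["teacher"]
    if slot.location == pref.location:
        score += WEIGHTS["location"]
    return round(score, 2)
```

A placement that honors availability and location but swaps the preferred teacher would score 0.7 under these assumed weights, which is the kind of compromise an administrator would want to see rather than have hidden.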


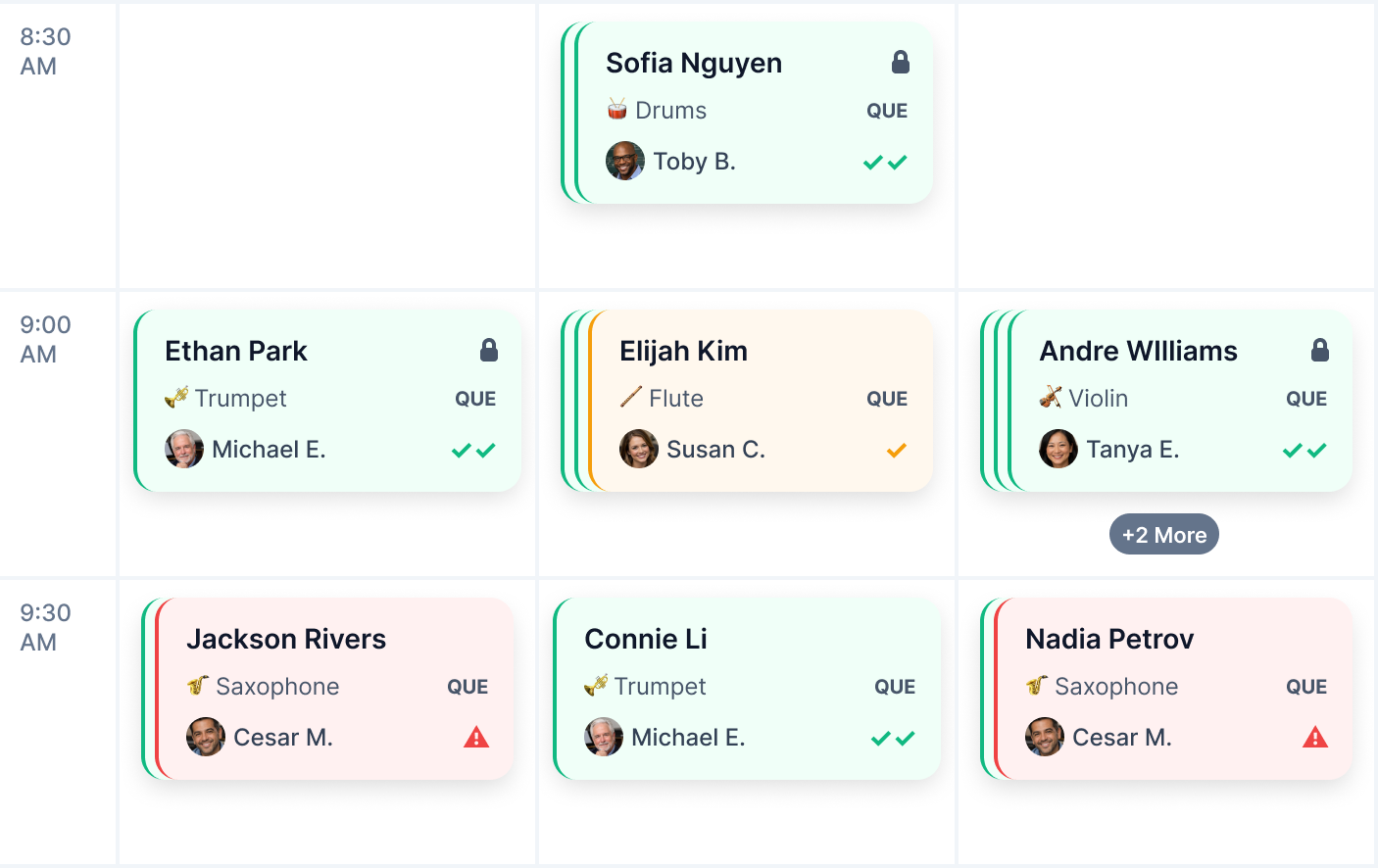

AI-generated schedule showing placement confidence and student conflicts

An algorithm can generate a schedule quickly, but administrators still need to understand the decisions it makes. A system that simply outputs a final schedule creates a new problem: no visibility into why students were placed where they were.

Instead of fully automating the process, I designed a hybrid workflow. The AI generates the initial schedule, evaluates placement quality, and highlights compromises.

Administrators then review the results, adjust edge cases, and approve placements before schedules are sent to teachers.

The system generates the first pass automatically, but administrators review and adjust placements before schedules are confirmed.

AI-generated scheduling followed by review, locking, and iterative re-optimization.

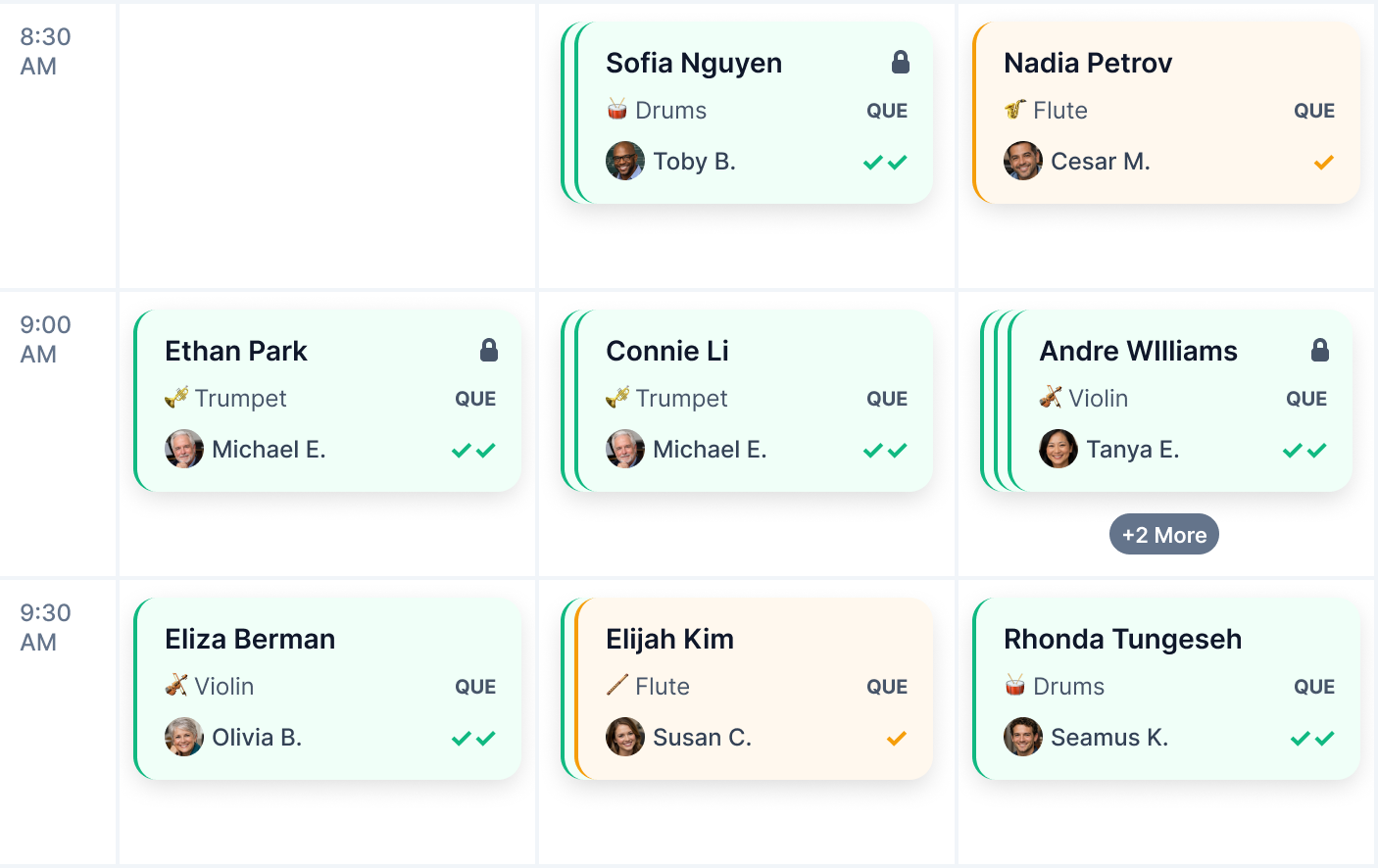

The AI could place hundreds of students at once. Administrators needed to understand the quality of those placements immediately.

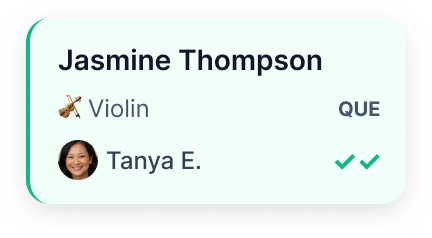

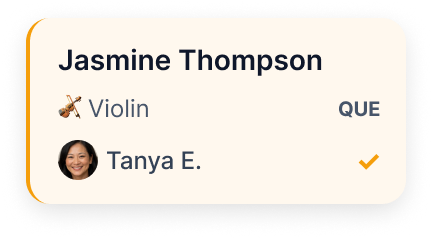

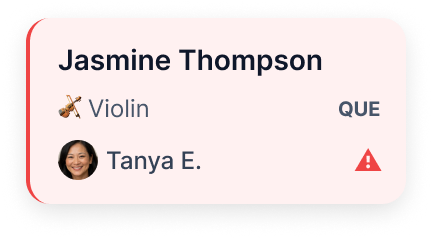

Instead of coloring cards by teacher or instrument, I used confidence-based color coding: green for strong matches, yellow for acceptable placements, and red for compromises.

That made the schedule readable at a glance and directed attention exactly where human judgment was needed.
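The color coding can be thought of as a simple banding of a placement score. The sketch below assumes a 0..1 score and uses made-up thresholds (0.8 and 0.5); the function names and cutoffs are hypothetical and only illustrate the idea of sorting the weakest placements to the top of the review list.

```python
def confidence_color(score: float, strong: float = 0.8, acceptable: float = 0.5) -> str:
    """Map a 0..1 placement score to a card color (thresholds are assumptions)."""
    if score >= strong:
        return "green"   # strong match
    if score >= acceptable:
        return "yellow"  # acceptable placement
    return "red"         # compromise that needs human judgment


def review_queue(scores: dict) -> list:
    """Return the students with red placements, weakest placement first."""
    red = [student for student, s in scores.items() if confidence_color(s) == "red"]
    return sorted(red, key=lambda student: scores[student])
```

For example, `review_queue({"ana": 0.9, "ben": 0.3, "cy": 0.1})` returns `["cy", "ben"]`, so the administrator sees the worst compromise first.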

Instead of hiding scheduling compromises, the system surfaces them visually so administrators can focus attention where it’s needed.

Most of the schedule usually worked well. The problem was the handful of compromised placements.

I introduced lock controls so administrators could preserve strong placements before re-running optimization on the rest.

That let the system improve problem areas without undoing the progress already made.


Locked placements remain unchanged while the AI improves unresolved conflicts.
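The lock-then-re-run behavior can be sketched as follows. This is a simple greedy stand-in, not the optimizer used in the product: locked placements are copied through untouched, and every other student is re-placed into the best remaining slot. The `reoptimize` function, its parameters, and the slot identifiers are all hypothetical.

```python
def reoptimize(placements, locked, candidates, score):
    """Re-place unlocked students while leaving locked placements unchanged.

    placements: dict of student -> current slot id
    locked:     set of students whose placement must not change
    candidates: dict of student -> list of slot ids the student could take
    score:      callable (student, slot id) -> float, higher is better
    """
    # Locked placements are carried over as-is and keep their slots reserved.
    result = {s: slot for s, slot in placements.items() if s in locked}
    taken = set(result.values())

    # Greedy heuristic (an assumption): handle students with the fewest
    # free options first so they are less likely to be left without a slot.
    unlocked = [s for s in placements if s not in locked]
    unlocked.sort(key=lambda s: sum(1 for c in candidates.get(s, []) if c not in taken))

    for student in unlocked:
        free = [c for c in candidates.get(student, []) if c not in taken]
        if not free:
            result[student] = None  # unresolved; left for manual review
            continue
        best = max(free, key=lambda c: score(student, c))
        result[student] = best
        taken.add(best)
    return result
```

Because locked slots are reserved before anything else is placed, a re-run can only change the placements the administrator has not yet approved.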

The AI handled the heavy scheduling logic across hundreds of constraints. Administrators retained control over edge cases, conflicts, and final approval.

The system turned scheduling from a fragile manual task into a faster, iterative collaboration between automation and judgment.

AI-optimized schedule after administrator review and locked placements.

The next step would be deeper teacher comparison tools and availability overlays, allowing administrators to evaluate alternatives before committing to a compromise placement.

The core workflow already established the right balance. The next iteration would make that decision-making layer even more transparent.